Low broadcast latency has become a mandatory requirement in tenders and competitions for building head-end stations and CDNs. Previously, such criteria applied only to sports broadcasting, but now operators require low latency from broadcast equipment suppliers in every area: news, concerts, performances, interviews, talk shows, debates, e-sports and gambling.

In general terms, latency is the difference between the time a particular video frame is captured by a device (camera, playout, encoder, etc.) and the time that frame is played on the end user's display.

Low latency should not come at the cost of signal quality: buffering during encoding and multiplexing must be kept to a minimum while the picture stays smooth and clear on the screen of any device. Another prerequisite is guaranteed delivery: all lost packets must be recovered, so that transmission over open networks causes no problems.

More and more services are migrating to the cloud to save on rented premises, electricity and hardware. This makes low latency harder to achieve, because it has to be maintained over links with a high RTT (Round Trip Time). This is especially true when transmitting high bitrates for HD and UHD video, for example, when the cloud server is located in the USA and the content consumer is in Europe.

In this review, we analyze the low-latency broadcasting solutions currently available on the market.

UDP

Probably the first technology widely used in modern television broadcasting and associated with the term "low latency" was multicast broadcasting of MPEG Transport Stream content over UDP. This format was typically chosen for closed, lightly loaded networks where the probability of packet loss was minimal: for example, broadcasting from an encoder to a modulator at a head-end station (often within the same server rack), or IPTV broadcasting over a dedicated copper or fiber optic line with amplifiers and repeaters. The technology is used universally and demonstrates excellent latency. In our market, domestic companies have achieved a combined encoding, Ethernet transfer and decoding latency of no more than 80 ms at 25 frames per second; at higher frame rates, the latency is even lower.

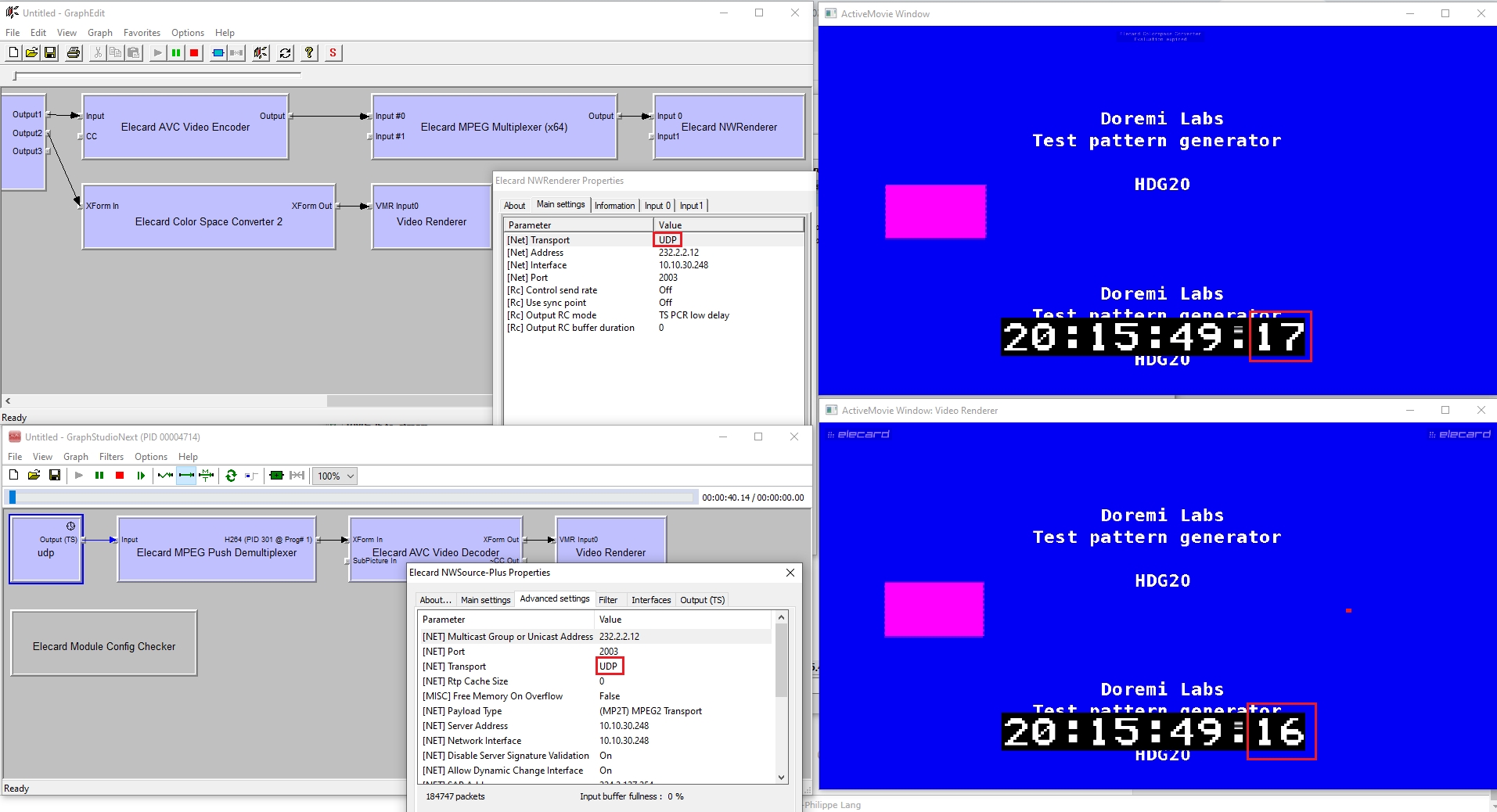

Figure 1. UDP broadcast latency measurement in a lab

The first picture shows the signal from an SDI capture card. The second shows the same signal after it has passed through the encoding, multiplexing, broadcasting, receiving and decoding stages. As you can see, the second signal lags by one unit, in this case one frame, which is 40 ms at 25 frames per second. A similar solution was used at the 2017 Confederations Cup and the 2018 FIFA World Cup, where the only additions to the chain were a modulator, a distributed DVB-C network and a TV set as the end device. The total latency was 220–240 ms.
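The arithmetic behind such a lab measurement is simple: the lag between the two pictures, counted in frames, is multiplied by the frame duration (1000 / fps milliseconds). A minimal sketch, assuming each picture carries a burnt-in non-drop-frame timecode in HH:MM:SS:FF form; the function names and timecode values are hypothetical:

```python
def timecode_to_frames(tc: str, fps: int) -> int:
    """Convert a non-drop-frame HH:MM:SS:FF timecode into an absolute frame count."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    return ((hh * 60 + mm) * 60 + ss) * fps + ff


def latency_ms(reference_tc: str, decoded_tc: str, fps: int) -> float:
    """How many frames the decoded picture lags behind the reference, in milliseconds."""
    lag_frames = timecode_to_frames(reference_tc, fps) - timecode_to_frames(decoded_tc, fps)
    return lag_frames * 1000.0 / fps


# One frame of lag at 25 fps (made-up timecodes).
print(latency_ms("10:00:00:05", "10:00:00:04", 25))  # 40.0
# The same one-frame lag at 50 fps is only half as long.
print(latency_ms("10:00:00:05", "10:00:00:04", 50))  # 20.0
```

This also shows why a higher frame rate lowers the latency: the pipeline delay is quantized in frames, and each frame is shorter.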

What if the signal passes through an external network? Then there are many problems to overcome: interference, traffic shaping, congested channels, hardware errors, damaged cables and software-level issues. In this case, low latency alone is not enough; lost packets must also be recovered. For UDP, Forward Error Correction (FEC), which adds redundant data (overhead) to the stream, does a good job. However, the required network throughput inevitably increases, and so do latency and the level of redundancy, depending on the expected packet loss rate. The share of packets that FEC can recover is always limited and may vary significantly during transmission over open networks. Thus, to transfer large amounts of data reliably over long distances, a large amount of redundant traffic has to be added.
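To make the trade-off concrete, here is a minimal sketch of the simplest FEC scheme: one XOR parity packet per group of N packets, in the spirit of the XOR-based row FEC used for MPEG-TS over IP (not the exact scheme of any particular product). It repairs a single loss per group at a fixed overhead of 1/N; two losses in the same group are unrecoverable, which is why protecting against heavier loss requires more redundancy:

```python
from functools import reduce
from typing import List, Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length packets."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(group: List[bytes]) -> bytes:
    """One parity packet per group: the XOR of all packets (equal lengths assumed)."""
    return reduce(xor_bytes, group)


def recover(received: List[Optional[bytes]], parity: bytes) -> List[Optional[bytes]]:
    """Rebuild at most one missing packet (marked None) in a group from the parity packet."""
    missing = [i for i, pkt in enumerate(received) if pkt is None]
    if len(missing) > 1:
        raise ValueError("one parity packet can repair only one loss per group")
    restored = list(received)
    if missing:
        present = [pkt for pkt in received if pkt is not None]
        # parity XOR (all surviving packets) = the lost packet
        restored[missing[0]] = reduce(xor_bytes, present, parity)
    return restored


group = [bytes([i]) * 188 for i in range(1, 6)]  # five fake 188-byte TS packets
parity = make_parity(group)
damaged = group[:2] + [None] + group[3:]           # packet #2 lost in transit
assert recover(damaged, parity) == group
print(f"overhead: {len(parity) / sum(map(len, group)):.0%}")  # overhead: 20%
```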

TCP

Let's consider technologies based on the TCP protocol (reliable delivery). If the checksum of a received packet does not match the value in the TCP header, the packet is discarded and has to be resent. And if either the client or the server does not support Selective Acknowledgment (SACK), the entire chain of TCP packets is resent, from the lost packet to the last one received, and at a reduced rate.
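A simplified model of this difference, following the description above (real TCP stacks also involve timeouts, fast retransmit and congestion control, which this sketch ignores; the function names are hypothetical):

```python
from typing import List, Set


def resent_without_sack(lost: Set[int], last_sent: int) -> List[int]:
    """Cumulative ACKs only: everything from the first hole up to the last sent packet is resent."""
    if not lost:
        return []
    return list(range(min(lost), last_sent + 1))


def resent_with_sack(lost: Set[int]) -> List[int]:
    """SACK tells the sender exactly which packets arrived, so only the holes are resent."""
    return sorted(lost)


lost = {3}  # packet 3 out of 0..9 was dropped
print(len(resent_without_sack(lost, last_sent=9)))  # 7 (packets 3..9)
print(len(resent_with_sack(lost)))                  # 1
```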

TCP used to be avoided for low-latency live broadcasts, because latency grows with error checking, packet retransmission, the three-way handshake, "slow start" and channel overflow prevention (the TCP Slow Start and congestion avoidance phases). The start-up delay before transmission begins may reach five times the round-trip time (RTT) even on a wide channel, and increasing throughput has very little effect on this delay.
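A back-of-the-envelope sketch shows why the start-up delay is counted in RTTs rather than in bandwidth. It assumes an initial congestion window of 10 segments (the IW10 value from RFC 6928, common in Linux), a 1460-byte MSS, one RTT for the three-way handshake, no TLS and no loss; all parameters are illustrative:

```python
import math


def startup_rtts(nbytes: int, mss: int = 1460, init_cwnd: int = 10, handshake_rtts: int = 1) -> int:
    """RTTs needed to deliver nbytes over a fresh TCP connection under idealized slow start.

    Assumes no loss, no receive-window limit and a congestion window that doubles every RTT.
    Link bandwidth does not appear anywhere: only the number of round trips matters.
    """
    segments = math.ceil(nbytes / mss)
    cwnd, sent, rtts = init_cwnd, 0, handshake_rtts
    while sent < segments:
        sent += cwnd  # one full window goes out per round trip
        cwnd *= 2     # slow start doubles the window each RTT
        rtts += 1
    return rtts


print(startup_rtts(200_000))    # 5
print(startup_rtts(1_000_000))  # 8
```

At an RTT of 100 ms, delivering the first 200 KB already takes about 0.5 s, however wide the channel is.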

Also, applications that broadcast over TCP have no control over the protocol itself (its timeouts or retransmission window sizes), since TCP delivers data as a single continuous stream, and when an error occurs the application may "freeze" for an indefinite period of time. Higher-level protocols cannot configure TCP to minimize broadcasting problems either.

At the same time, there are protocols that work efficiently over UDP even in open networks and over long distances.

Let’s consider and compare various protocol implementations. Of the TCP-based protocols and data transfer formats, we note RTMP, HLS and CMAF, and of UDP-based protocols and data transfer formats, we note WebRTC and SRT.

RTMP

RTMP was a proprietary Macromedia protocol (now owned by Adobe) and was very popular in the heyday of Flash-based applications. It has several variants, including ones with TLS/SSL encryption and even a UDP-based variant, RTMFP (Real-Time Media Flow Protocol), which is used for point-to-point connections. RTMP splits the stream into fragments (chunks) whose size can change dynamically. Within a channel, audio and video packets may be interleaved and multiplexed.
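A simplified sketch of how one RTMP message is split into chunks, based on our reading of the Adobe RTMP specification: the first chunk carries a full type 0 header (3-byte timestamp, 3-byte message length, 1-byte message type, 4-byte little-endian message stream ID), and continuation chunks carry only a one-byte type 3 basic header. The default chunk size is 128 bytes until a Set Chunk Size message changes it. Extended timestamps and chunk stream IDs above 63 are deliberately left out. Because messages are cut into small chunks, chunks of an audio message and a video message can be interleaved on the wire:

```python
import struct
from typing import List

DEFAULT_CHUNK_SIZE = 128  # RTMP default until a Set Chunk Size message changes it


def basic_header(fmt: int, csid: int) -> bytes:
    """One-byte basic header: 2-bit format type + 6-bit chunk stream ID (2..63 only)."""
    if not 2 <= csid <= 63:
        raise ValueError("this sketch handles only chunk stream IDs 2..63")
    return bytes([(fmt << 6) | csid])


def chunk_message(payload: bytes, csid: int, timestamp: int, type_id: int,
                  stream_id: int, chunk_size: int = DEFAULT_CHUNK_SIZE) -> List[bytes]:
    """Split one RTMP message into a type 0 chunk followed by type 3 continuation chunks."""
    if timestamp >= 0xFFFFFF:
        raise ValueError("extended timestamps are not handled in this sketch")
    header0 = (timestamp.to_bytes(3, "big") + len(payload).to_bytes(3, "big")
               + bytes([type_id]) + struct.pack("<I", stream_id))
    chunks = []
    for offset in range(0, max(len(payload), 1), chunk_size):
        body = payload[offset:offset + chunk_size]
        if offset == 0:
            chunks.append(basic_header(0, csid) + header0 + body)
        else:
            chunks.append(basic_header(3, csid) + body)  # fmt 3: reuse the previous header
    return chunks


# Message type 9 is video; chunk stream ID 6 is an arbitrary example.
video = chunk_message(b"\x00" * 300, csid=6, timestamp=40, type_id=9, stream_id=1)
print([len(c) for c in video])  # [140, 129, 45]
```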

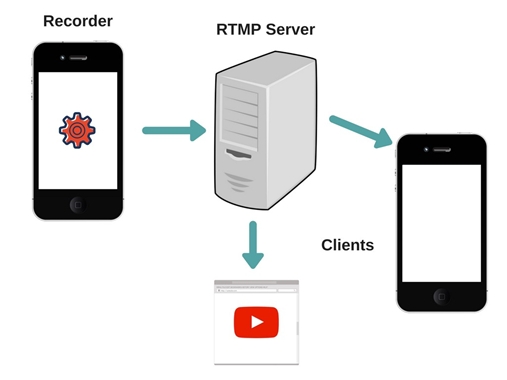

Figure 2. Example of RTMP broadcast implementation

RTMP forms several virtual channels over which audio, video, metadata, etc. are transmitted. Most CDNs no longer support RTMP for distributing traffic to end clients. However, there is an RTMP module for Nginx that supports plain RTMP, which runs on top of TCP and uses port 1935 by default. With it, Nginx can act as an RTMP server and distribute the content it receives from RTMP streamers. RTMP is also still a popular protocol for delivering traffic to CDNs, but in the future this traffic will be streamed using other protocols.

Today Flash technology is outdated and practically unsupported: browsers either reduce its support or block it completely. RTMP does not support HTML5 and does not work in browsers natively (playback requires the Adobe Flash plugin). To bypass firewalls, RTMPT is used (RTMP encapsulated in HTTP requests over the standard ports 80/443 instead of 1935), but this significantly increases latency and overhead (by various estimates, RTT and overall latency increase by 30%). RTMP is still popular, for example, for broadcasting to YouTube or social media (RTMPS for Facebook).

The key disadvantages of RTMP are the lack of HEVC/VP9/AV1 support and a limit of two audio tracks. Also, RTMP packet headers carry no send-time timestamps, only labels calculated from the frame rate, so a decoder does not know exactly when to decode the stream. The receiving side therefore has to pace samples to the decoder evenly on its own, which means enlarging the buffer by the amount of packet jitter.
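To illustrate the buffering cost: if the receiver only knows the nominal frame times (frame index × 40 ms at 25 fps), it has to hold enough data to absorb the whole spread of network delays. A minimal sketch with made-up arrival times; the function name is hypothetical:

```python
from typing import Sequence


def required_jitter_buffer_ms(send_ms: Sequence[float], arrival_ms: Sequence[float]) -> float:
    """Extra buffering needed so that every frame is on hand at its playout time.

    If each frame is played at its nominal time plus the largest observed delay,
    the earliest-arriving frame waits (max delay - min delay) in the buffer.
    """
    delays = [a - s for s, a in zip(send_ms, arrival_ms)]
    return max(delays) - min(delays)


# Frames nominally 40 ms apart (25 fps), arriving with uneven network delay.
frame_times = [0, 40, 80, 120]
arrivals = [100, 150, 175, 240]
print(required_jitter_buffer_ms(frame_times, arrivals))  # 25
```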

Another RTMP problem is the retransmission of lost TCP packets described above. To keep reverse traffic low, acknowledgments (ACKs) are not sent to the sender for every packet: only after a chain of packets has been received is a positive (ACK) or negative (NACK) acknowledgment sent to the broadcasting party.

According to various estimates, the latency in broadcasting using RTMP is at least two seconds with a full encoding path (RTMP encoder → RTMP server → RTMP client).

CMAF

Common Media Application Format (CMAF) is a format developed by MPEG (Moving Picture Experts Group) at the initiative of Apple and Microsoft for adaptive streaming over HTTP (with a bitrate that adapts to changes in the available network bandwidth). Traditionally, Apple's HTTP Live Streaming (HLS) used MPEG Transport Stream, while MPEG DASH used fragmented MP4. The CMAF specification was released in July 2017. In CMAF, fragmented MP4 (ISOBMFF) segments are transmitted over HTTP with two different playlists for the same content, each intended for a specific player: iOS (HLS) or Android/Microsoft (MPEG DASH).

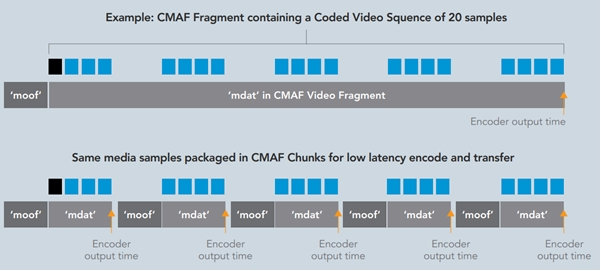

By default, CMAF (like HLS and MPEG DASH) is not designed for low-latency broadcasting. But interest in low latency keeps growing, so some manufacturers offer an extension of the standard, such as Low Latency CMAF. This extension assumes that both the broadcasting and the receiving sides support two methods:

- Chunk encoding: dividing segments into sub-segments (small fragments consisting of moof + mdat MP4 boxes, which together make up a whole segment suitable for playback) and sending them before the entire segment is complete;

- Chunked Transfer Encoding: using HTTP/1.1 to send sub-segments to the CDN (origin). Only one HTTP POST request is sent per 4-second segment, and within that same session 100 small fragments (one frame each at 25 frames per second) may then be sent. The player may also request incomplete segments: the CDN delivers the finished part using chunked transfer encoding and keeps the connection open until new fragments are added to the segment being downloaded. The transfer of the segment to the player is completed as soon as the entire segment has been formed on the CDN side.

Figure 3. Standard and fragmented CMAF
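The HTTP/1.1 chunked framing used in the second method is simple: each piece of the body is sent as its length in hexadecimal, CRLF, the data and another CRLF, and a zero-length chunk ends the body. A minimal sketch of how an encoder could stream the CMAF chunks (moof + mdat) of a segment that is still being encoded; the generator name and the placeholder chunk bytes are hypothetical:

```python
from typing import Iterable, Iterator


def http_chunked(cmaf_chunks: Iterable[bytes]) -> Iterator[bytes]:
    """Frame each CMAF chunk as one HTTP/1.1 chunk as soon as the encoder produces it."""
    for chunk in cmaf_chunks:
        if chunk:  # an empty chunk would be read as the end of the body
            yield f"{len(chunk):X}\r\n".encode("ascii") + chunk + b"\r\n"
    yield b"0\r\n\r\n"  # last-chunk: the whole segment has been sent


# A 4-second segment at 25 fps with one frame per CMAF chunk -> 100 chunks + terminator.
frames = (b"moof+mdat" for _ in range(4 * 25))
wire = list(http_chunked(frames))
print(len(wire))  # 101
print(wire[0])    # b'9\r\nmoof+mdat\r\n'
```

In practice the output would be written to a socket or passed to an HTTP client that accepts a streaming request body; the sketch only shows the framing.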

Switching between profiles requires buffering (at least 2 seconds). Given this, as well as potential delivery problems, the developers of the standard claim a potential latency of less than three seconds. At the same time, such key features as scaling via CDN to thousands of simultaneous clients, encryption (including Common Encryption support), HEVC and WebVTT (subtitle) support, guaranteed delivery and compatibility with different players (Apple/Microsoft) are retained. The main disadvantage is that the player must support LL CMAF (fragmented segments and advanced handling of internal buffers). However, an incompatible player can still play the content within the CMAF specification, with the standard latency typical of HLS or DASH.

Low Latency HLS

In June 2019, Apple published a specification for Low Latency HLS.

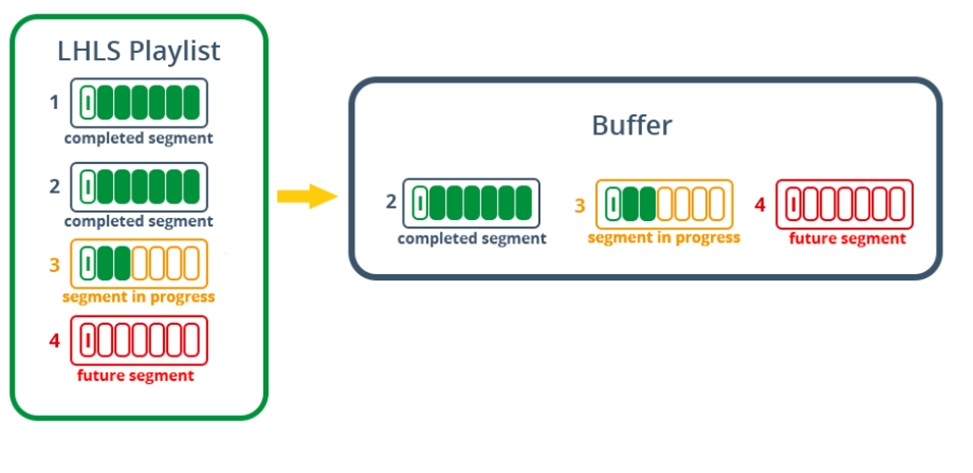

It consists of the following components:

- Generation of partial segments (fragmented MP4 or TS) as short as 200 ms, which become available before the whole segment (chunk) made up of these parts is complete (EXT-X-PART). Outdated partial segments are regularly removed from the playlist.

- The server side may use HTTP/2 Push to send an updated playlist along with a new segment (or fragment). However, this recommendation was removed in the January 2020 revision of the specification.

- The server holds (blocks) a playlist request until a version of the playlist containing the new segment is available. This blocking playlist reload eliminates polling.

- Instead of the full playlist, only the difference between playlists (a delta) is sent: the client keeps the playlist it already has, and the server sends only the incremental changes, with already known segments replaced by an EXT-X-SKIP tag.

- The server announces the upcoming availability of new partial segments (preload hint, EXT-X-PRELOAD-HINT).

- Information about the state of adjacent renditions (rendition reports, EXT-X-RENDITION-REPORT) is delivered in parallel with the playlist for faster switching.

Figure 4. Principle of LL HLS operation
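With blocking playlist reload, the client tells the server which part it is waiting for through delivery directives in the query string (`_HLS_msn`, `_HLS_part` and, for delta updates, `_HLS_skip=YES`). A minimal sketch, assuming for simplicity a fixed number of parts per segment (a real player takes this from the playlist itself) and a hypothetical playlist URL:

```python
from urllib.parse import urlencode


def next_blocking_request(playlist_url: str, last_msn: int, last_part: int,
                          parts_per_segment: int, delta: bool = False) -> str:
    """Build the playlist URL that the server should hold until the next part exists."""
    if last_part + 1 < parts_per_segment:
        msn, part = last_msn, last_part + 1
    else:
        msn, part = last_msn + 1, 0  # roll over to the first part of the next segment
    params = {"_HLS_msn": msn, "_HLS_part": part}
    if delta:
        params["_HLS_skip"] = "YES"  # ask for a delta playlist with EXT-X-SKIP
    separator = "&" if "?" in playlist_url else "?"
    return f"{playlist_url}{separator}{urlencode(params)}"


url = "https://example.com/live/index.m3u8"
print(next_blocking_request(url, 273, 2, parts_per_segment=4))
# https://example.com/live/index.m3u8?_HLS_msn=273&_HLS_part=3
print(next_blocking_request(url, 273, 3, parts_per_segment=4, delta=True))
# https://example.com/live/index.m3u8?_HLS_msn=274&_HLS_part=0&_HLS_skip=YES
```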

The expected latency with full support of this specification by the CDN and the player is less than three seconds. HLS is very widely used for broadcasting over open networks thanks to its excellent scalability, encryption and adaptive bitrate support, and cross-platform compatibility. It is also backward compatible, which is useful if the player does not support LL HLS.

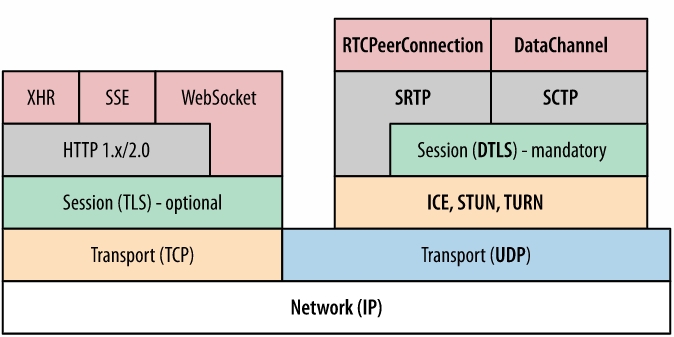

WebRTC

Web Real-Time Communication (WebRTC) is an open-source protocol developed by Google in 2011. It is used in Google Remote Desktop, Google Duo, Google Meet, Zoom, Hangouts, YouTube Live (browser-based streaming) and Stadia cloud gaming. WebRTC is a set of standards, protocols and JavaScript programming interfaces that implements end-to-end encryption using DTLS-SRTP within a peer-to-peer connection. Moreover, the technology requires no third-party plugins or software and passes through firewalls without loss of quality or added latency (for example, during video conferencing in browsers). For video broadcasting, the UDP-based WebRTC implementation is typically used.

The protocol works as follows: a host sends a connection request to the peer it wants to connect to. Until the connection between the peers is established, they communicate through a third party, a signaling server. Then each peer asks a STUN server "Who am I?", that is, how it can be reached from the outside. Public Google STUN servers are available for this (for example, stun.l.google.com:19302). The STUN server returns the IP addresses and ports through which the current host can be reached, and ICE candidates are formed from this list. The other side does the same. The ICE candidates are exchanged via the signaling server, and it is at this stage that a peer-to-peer connection is established, i.e. a peer-to-peer network is formed.
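The "Who am I?" query is a STUN Binding request (RFC 5389): a 20-byte header with message type 0x0001, the magic cookie 0x2112A442 and a random 12-byte transaction ID. The server answers with an XOR-MAPPED-ADDRESS attribute whose port and IPv4 address are XOR-ed with the magic cookie. A minimal sketch that builds the request and decodes an IPv4 answer (IPv6, error responses and message integrity are left out; the function names are our own):

```python
import os
import struct
from typing import Tuple

MAGIC_COOKIE = 0x2112A442
BINDING_REQUEST = 0x0001
BINDING_SUCCESS = 0x0101
XOR_MAPPED_ADDRESS = 0x0020


def build_binding_request() -> Tuple[bytes, bytes]:
    """Return (message, transaction_id) for an attribute-less STUN Binding request."""
    transaction_id = os.urandom(12)
    header = struct.pack("!HHI", BINDING_REQUEST, 0, MAGIC_COOKIE) + transaction_id
    return header, transaction_id


def parse_xor_mapped_address(response: bytes, transaction_id: bytes) -> Tuple[str, int]:
    """Extract the public IPv4 address and port from a Binding success response."""
    msg_type, msg_len, cookie = struct.unpack("!HHI", response[:8])
    if msg_type != BINDING_SUCCESS or cookie != MAGIC_COOKIE or response[8:20] != transaction_id:
        raise ValueError("not a matching STUN Binding success response")
    pos, end = 20, 20 + msg_len
    while pos + 4 <= end:
        attr_type, attr_len = struct.unpack("!HH", response[pos:pos + 4])
        value = response[pos + 4:pos + 4 + attr_len]
        if attr_type == XOR_MAPPED_ADDRESS and value[1] == 0x01:  # family 0x01 = IPv4
            port = struct.unpack("!H", value[2:4])[0] ^ (MAGIC_COOKIE >> 16)
            addr = struct.unpack("!I", value[4:8])[0] ^ MAGIC_COOKIE
            return ".".join(str(b) for b in addr.to_bytes(4, "big")), port
        pos += 4 + attr_len + (-attr_len % 4)  # attribute values are padded to 4 bytes
    raise ValueError("no IPv4 XOR-MAPPED-ADDRESS in the response")
```

To try it against a public server, send the request with `socket.sendto()` over a UDP socket to stun.l.google.com:19302 and pass the reply to `parse_xor_mapped_address()`.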

If a direct connection cannot be established, then a so-called TURN server acts as a relay/proxy server, which is also included in the list of ICE candidates.
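Which candidate is preferred is decided by priority. RFC 8445 recommends computing it as 2^24 × type preference + 2^8 × local preference + (256 - component ID), with type preferences of 126 for host, 110 for peer-reflexive, 100 for server-reflexive and 0 for relayed (TURN) candidates, so the TURN relay is used only as a last resort. A minimal sketch of candidate priorities (the real algorithm then ranks candidate pairs, which is omitted here):

```python
from typing import List

# Recommended type preferences from RFC 8445.
TYPE_PREFERENCE = {"host": 126, "prflx": 110, "srflx": 100, "relay": 0}


def candidate_priority(cand_type: str, local_pref: int = 65535, component_id: int = 1) -> int:
    """Priority of a single ICE candidate per the RFC 8445 recommended formula."""
    return (TYPE_PREFERENCE[cand_type] << 24) + (local_pref << 8) + (256 - component_id)


def order_candidates(cand_types: List[str]) -> List[str]:
    """Highest priority first: direct paths are tried before the TURN relay."""
    return sorted(cand_types, key=candidate_priority, reverse=True)


print(candidate_priority("host"))                    # 2130706431
print(order_candidates(["relay", "host", "srflx"]))  # ['host', 'srflx', 'relay']
```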

SCTP (application data) and SRTP (audio and video data) protocols are responsible for multiplexing, sending, congestion control and reliable delivery. For the "handshake" exchange and further traffic encryption, DTLS is used.

Figure 5. WebRTC protocol stack

The video codecs used are VP8, VP9, H.264 and AV1, and the audio codecs are Opus, G.711, iLBC and iSAC. Maximum supported resolution: 4K at 60 frames per second.

A security disadvantage of WebRTC is that it can reveal a user's real IP address, even behind NAT or when using the Tor network or a proxy server. Because of its connection architecture, WebRTC is not intended for a large number of simultaneous viewers (it is difficult to scale), and it is currently rarely supported by CDNs. WebRTC-based solutions from different vendors are almost always incompatible with each other, because each uses its own implementation. Finally, WebRTC is inferior to its peers in coding quality and in the maximum amount of data it can transmit.

The latency claimed by Google is less than a second. Moreover, the protocol can be used not only for video conferencing but also, for example, for file transfer.

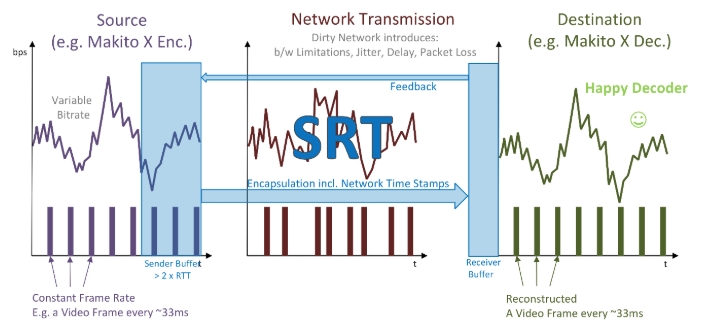

SRT

Secure Reliable Transport (SRT) is a protocol developed by Haivision in 2012. The protocol operates on the basis of UDT (UDP-based Data Transfer Protocol) and ARQ packet recovery technology. It supports AES-128 and AES-256 encryption. In addition to listener (server) mode, it supports caller (client) and rendezvous (when both sides initiate a connection) modes, which allows connections to be established through firewalls and NAT. The "handshake" process in SRT is performed within existing security policies, therefore external connections are allowed without opening permanent external ports in the firewall.

SRT embeds a timestamp in each packet, which allows playback at a rate equal to the stream encoding rate without large buffering, while smoothing out jitter (the constantly varying packet arrival rate) and the incoming bitrate. Unlike TCP, where the loss of one packet may cause the entire chain of packets to be resent starting with the lost one, SRT identifies a particular packet by its sequence number and resends only that packet. This has a positive effect on latency and redundancy. The lost packet is resent with higher priority than regular traffic. Unlike standard UDT, SRT has a completely redesigned retransmission architecture that reacts as soon as a packet is lost. This technology is a variation of selective repeat (selective reject) ARQ. Note that a specific lost packet may be resent only a limited number of times: the sender skips a packet when its timestamp is older than 125% of the total latency. SRT also supports FEC, and users decide which of the two technologies to use (or use both) to balance the lowest latency against the highest delivery reliability.

Figure 6. The principle of SRT operation in open networks
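A sketch of the bookkeeping behind selective retransmission: gaps in the received sequence numbers are compressed into loss ranges that the receiver can report in a NAK, and only those packets are resent. The sender-side check follows the 125% rule described above. The function names are hypothetical, and the 31-bit sequence number wrap-around that real SRT must handle is ignored:

```python
from typing import Iterable, List, Tuple


def loss_ranges(received_seqs: Iterable[int]) -> List[Tuple[int, int]]:
    """Compress gaps in the received sequence numbers into (first, last) loss ranges."""
    seqs = sorted(set(received_seqs))
    return [(prev + 1, cur - 1) for prev, cur in zip(seqs, seqs[1:]) if cur - prev > 1]


def packets_to_resend(ranges: List[Tuple[int, int]]) -> List[int]:
    """Expand loss ranges into the individual packets the sender must retransmit."""
    return [seq for first, last in ranges for seq in range(first, last + 1)]


def too_late(packet_age_ms: float, latency_ms: float) -> bool:
    """Sender-side drop rule from the text: skip packets older than 125% of the latency."""
    return packet_age_ms > 1.25 * latency_ms


gaps = loss_ranges([1, 2, 3, 5, 6, 9, 10])
print(gaps)                     # [(4, 4), (7, 8)]
print(packets_to_resend(gaps))  # [4, 7, 8]
print(too_late(151, 120))       # True
```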

Data transmission in SRT may be bidirectional: both endpoints may send data at the same time, and each may act either as the listener or as the party initiating the connection (caller). Rendezvous mode may be used when both sides need to initiate the connection. The protocol has an internal multiplexing mechanism that allows several streams of one session to be multiplexed into one connection over a single UDP port. SRT is also suitable for fast file transfer, a capability first introduced in UDT.

SRT has a network congestion control mechanism. Every 10 ms, the sender receives up-to-date data on the RTT (round-trip time) and its variation, the available buffer size, the packet receiving rate and the approximate capacity of the current link. A minimum interval is enforced between two packets sent in succession. Packets that cannot be delivered in time are removed from the queue.
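The minimum gap between consecutive packets follows directly from the packet size and the sending rate. A sketch, assuming the 1316-byte payload (7 × 188-byte TS packets) commonly used in SRT live mode and a hypothetical 10 Mbps ceiling, together with the "remove packets that cannot be delivered in time" step; the helper names are illustrative, not SRT API calls:

```python
from typing import List, Tuple


def min_send_interval_us(payload_bytes: int, max_bw_bps: int) -> float:
    """Smallest allowed gap, in microseconds, between two consecutive packets at a bandwidth cap."""
    return payload_bytes * 8 * 1_000_000 / max_bw_bps


def drop_late(queue: List[Tuple[float, bytes]], now_ms: float,
              latency_ms: float) -> List[Tuple[float, bytes]]:
    """Keep only (timestamp_ms, payload) entries that can still arrive within the latency."""
    return [(ts, data) for ts, data in queue if now_ms - ts <= latency_ms]


print(min_send_interval_us(1316, 10_000_000))  # 1052.8
queue = [(0.0, b"a"), (50.0, b"b"), (130.0, b"c")]
print([data for _, data in drop_late(queue, now_ms=160.0, latency_ms=120.0)])  # [b'b', b'c']
```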

The developers claim that the minimum latency that may be achieved with SRT is 120 ms with a minimum buffer for transmission over short distances in closed networks. The total latency recommended for stable broadcasting is 3–4 RTT. In addition, SRT handles delivery at long distances (several thousand kilometers) and high bitrates (10 Mbps and higher) better than its competitor RTMP.
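Turning the recommendation into numbers: a minimal sketch that takes 3 to 4 times the RTT but never goes below the 120 ms minimum. The default multiplier, the floor and the roughly 100 ms transatlantic RTT used below are assumptions for illustration, not fixed protocol values:

```python
def recommended_srt_latency_ms(rtt_ms: float, rtt_multiplier: float = 4.0,
                               floor_ms: float = 120.0) -> float:
    """SRT latency setting: a multiple of the RTT (3-4 recommended), never below the minimum."""
    return float(max(floor_ms, rtt_multiplier * rtt_ms))


print(recommended_srt_latency_ms(5))       # 120.0 (LAN: the floor dominates)
print(recommended_srt_latency_ms(100))     # 400.0 (e.g. USA to Europe)
print(recommended_srt_latency_ms(100, 3))  # 300.0
```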

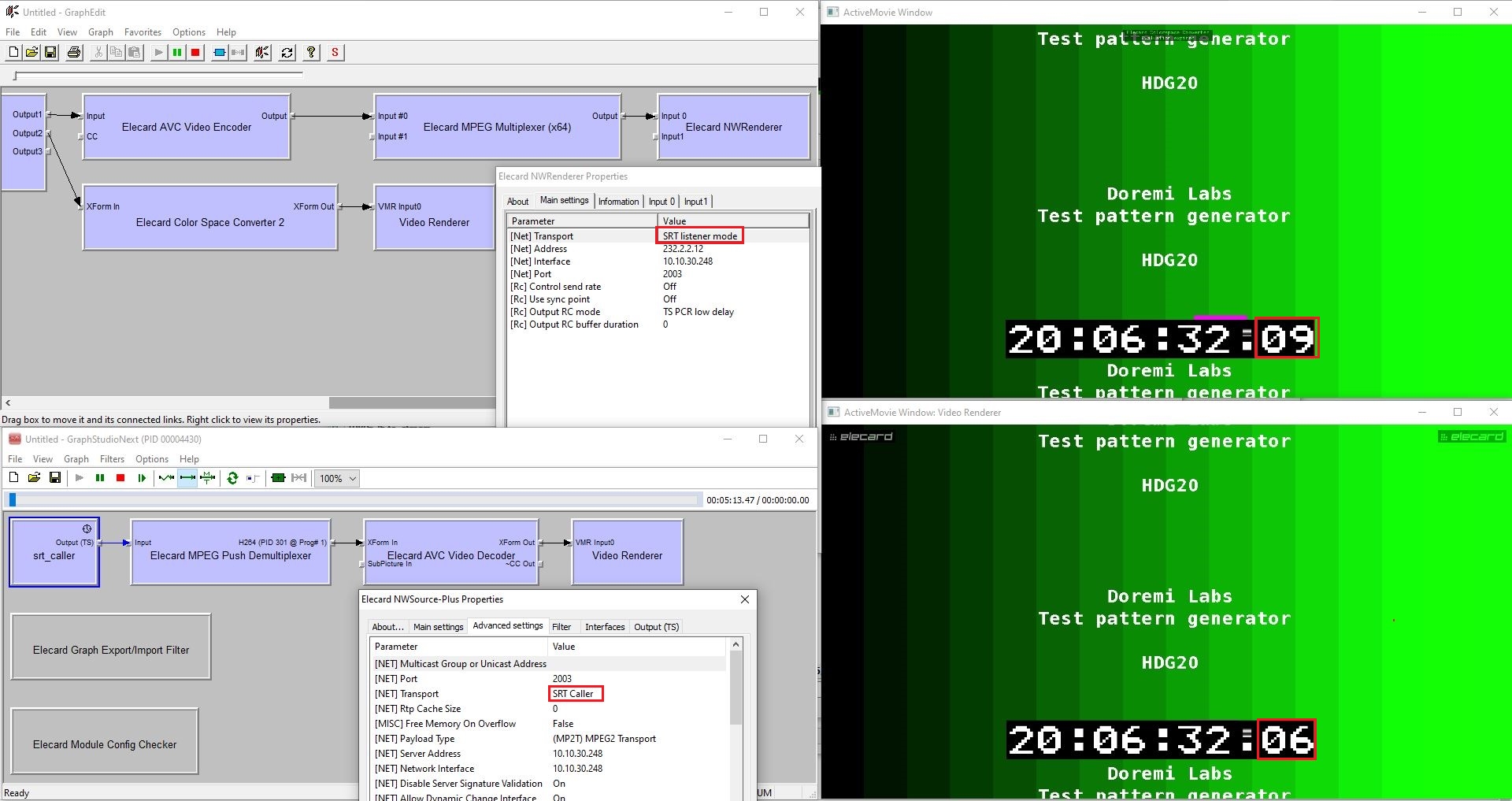

Figure 7. SRT broadcast latency measurement in a lab

In the example above, the laboratory-measured latency of SRT broadcasting is 3 frames at 25 frames per second, i.e. 40 ms × 3 = 120 ms. From this we may conclude that ultra-low latency at the level of 0.1 seconds, which is achievable in UDP broadcasting, is also attainable with SRT. SRT scalability is not at the level of HLS or DASH/CMAF, but SRT is widely supported by CDNs and forwarders (restreamers), and it also supports broadcasting directly to end clients through a media server in listener mode.

In 2017, Haivision open-sourced the SRT libraries and created the SRT Alliance, which comprises over 350 members.

Summary

As a summary, the following comparative table for protocols is provided:

| Function | RTMP | WebRTC | LL CMAF | LL HLS | SRT |
|---|---|---|---|---|---|
| Latency | ≥ 2 sec | < 1 sec | ≥ 2.5 sec | ≥ 2.5 sec | ≥ 120 ms |
| Throughput capacity | Average | Average | High | High | High |
| Scalability | Low¹ | Low | High | High | Medium |
| Cross-platform functions and support by manufacturers | Low² | High³ | High | High | High |
| Adaptive bitrate | No | Yes | Yes | Yes | No |
| Encryption support | Yes | Yes | Yes | Yes | Yes |
| Ability to pass through firewalls and NAT | Low | Medium | High | High | Medium |
| Redundancy | Medium | Low | Medium | Medium | Low |
| Support for a wide range of codecs | No | Yes | Yes | Yes | Yes |

¹ Not supported by CDNs for delivery to end users. Supported for contribution streaming, for example, to a CDN or restreamer.

² Not supported in browsers.

³ Not available in Safari.

Today, everything open-source and well-documented quickly gains popularity. It may be assumed that formats such as WebRTC and SRT have a long-term future in their respective areas of application. In terms of minimum latency, these protocols already outperform adaptive streaming over HTTP, while maintaining reliable delivery, low redundancy and encryption support (AES in SRT and DTLS/SRTP in WebRTC). Also, SRT's "little brother" (younger in age, but not in functionality and capabilities), the RIST protocol, has recently been gaining popularity, but that is a topic for a separate review. Meanwhile, RTMP is actively being pushed out of the market by new competitors, and due to the lack of native browser support, it is unlikely to be widely used in the near future.

Request a free demo of the Elecard CodecWorks live encoder, which supports the RTMP, HLS and SRT protocols.

Author


Vitaly Suturikhin

Head of Integration and Technical Support Department, Elecard

June 4, 2020